Architecture firms can show you the final render but not the chain of models, training data, and human decisions that produced it. As AI-generated facade concepts move from ComfyUI prototypes to poured concrete, that missing chain—provenance—is becoming a liability, compliance, and intellectual property problem all at once.

The Provenance Gap in the Built Environment

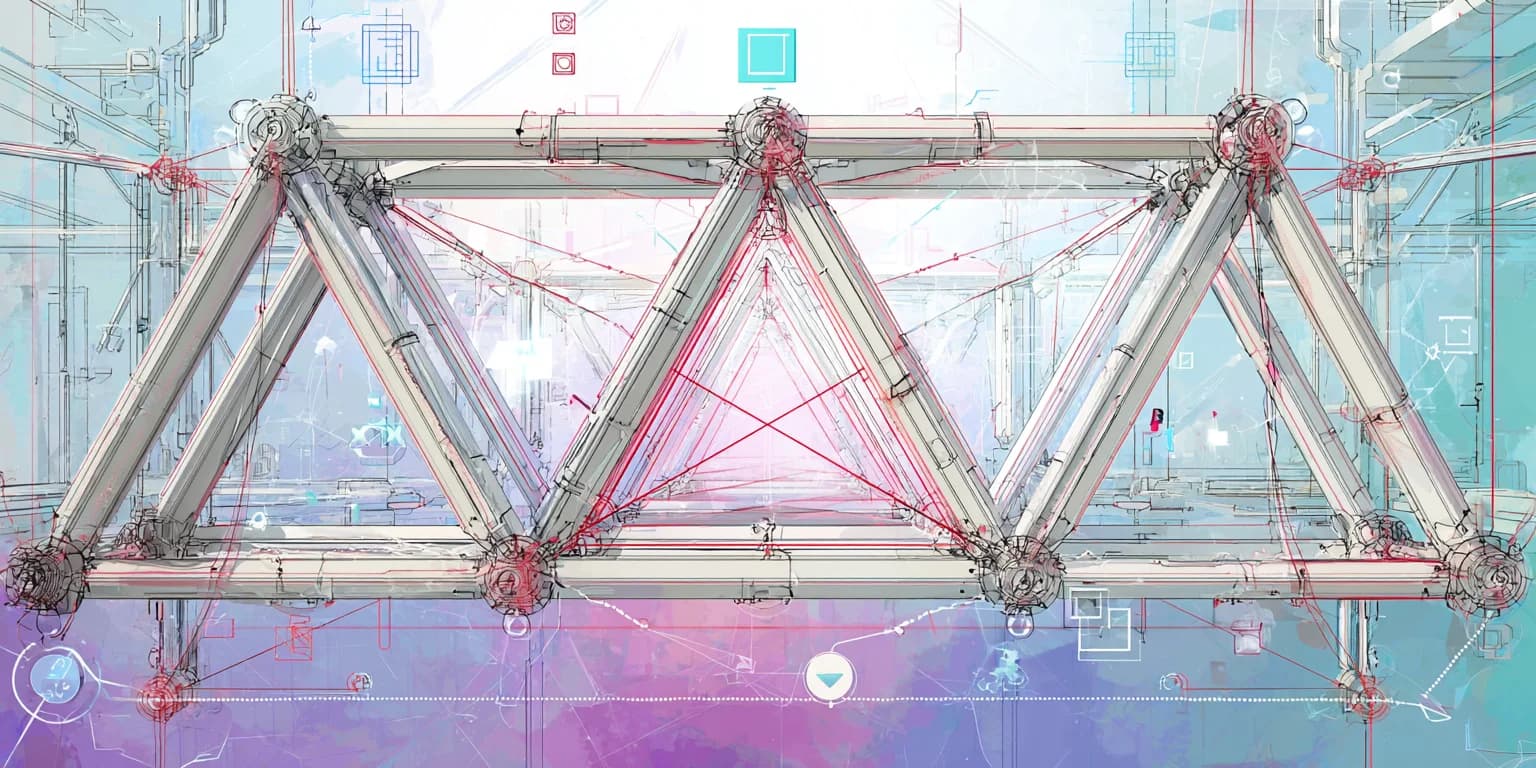

A generative design workflow in a modern AEC firm might touch three or more AI tools before a concept reaches a client's screen. A designer prompts Midjourney for massing studies, refines structural options in a parametric model informed by machine learning, and composites the result in Photoshop's generative fill. Each step transforms the asset. None of them, by default, record why a decision was made, what training data informed it, or where one tool's output ended and another's began.

This is the provenance gap: the distance between a finished deliverable and its generative history.

For most creative industries, that gap is an operational nuisance—teams lose 3–6 hours weekly searching for the right version of the right file. In architecture, the gap carries structural consequences. Buildings are regulated artifacts. They require permits, carry professional liability, and exist for decades. When a municipality asks “Was AI used in this design?” and the honest answer is “Yes, in ways we can no longer fully reconstruct,” the firm has a problem that no amount of talent can paper over.

Why AEC Is Uniquely Exposed

I think about this exposure in three parts: liability, intellectual property, and regulatory momentum.

Liability. Licensed architects stamp drawings. That stamp is a legal assertion of professional responsibility. When generative AI contributes to a design—even at the conceptual stage—the question of what the architect actually reviewed, modified, and approved becomes critical. If a facade system underperforms and the original concept was AI-generated, opposing counsel will ask for the provenance trail. Today, most firms cannot produce one.

Intellectual property. Copyright law in the United States currently does not extend protection to purely AI-generated works. The U.S. Copyright Office's January 2025 copyrightability report clarified that prompts alone do not provide sufficient human control to make users the authors of AI output. For AEC firms, this means the degree of human authorship in an AI-assisted design directly affects whether that design is protectable. Without provenance records showing where human creative decisions shaped the output, firms risk discovering their most distinctive work is uncopyrightable.

Regulatory momentum. The EU AI Act classifies systems that affect safety-critical infrastructure under its high-risk category, with penalties reaching up to €35 million or 7% of global turnover for the most serious violations. California's SB 942 imposes civil penalties of $5,000 per violation for AI disclosure failures, with each day of noncompliance counted as a separate violation. AEC firms operating across jurisdictions will increasingly face disclosure obligations that demand exactly the kind of asset lineage they are not currently capturing.

From Prototype to Pour: Where Provenance Breaks Down

Let's trace a realistic workflow to see where the trail goes cold.

1. Concept generation. A designer uses a diffusion model to explore facade patterns. The prompt, model version, seed, and guidance parameters constitute the generative origin—but they're typically stored nowhere persistent. They live in a browser tab, a Discord thread, or a terminal window that closes at the end of the day.

2. Refinement. The output moves into Rhino or Revit. A computational designer modifies geometry, adjusts for structural loads, adapts to site constraints. These modifications represent significant human authorship, but the link to the AI-generated starting point is severed the moment someone drags a PNG into a new project folder.

3. Client presentation. The design is composited, annotated, and rendered. Metadata from earlier stages—if it existed—is stripped during export. The client sees a polished deliverable with no visible history.

4. Construction documentation. Months later, the concept becomes a set of construction drawings. The original generative context now sits two or three software environments and dozens of human decisions upstream. Reconstructing it would require digital archaeology, the kind that costs creative teams an estimated 25% of their working time across industries.

Each handoff is a provenance break. And in AEC, every provenance break is a potential liability gap.
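To make step 1 concrete, here is a minimal Python sketch of capturing the generative origin at creation time. It assumes the firm controls the script or node that calls the model. The `GenerativeOrigin` record, its field names, and the `capture_origin` and `to_sidecar_json` helpers are hypothetical illustrations, not part of any tool named above. The record is keyed by a content hash of the image bytes, so it can still be matched to the file after someone renames it or drags it into a new project folder.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class GenerativeOrigin:
    """Hypothetical record of how an AI-generated asset was produced."""
    asset_sha256: str    # content hash of the file; unaffected by renames or moves
    model: str
    model_version: str
    prompt: str
    seed: int
    guidance_scale: float
    created_at: str      # ISO 8601 timestamp in UTC


def capture_origin(asset_bytes: bytes, model: str, model_version: str,
                   prompt: str, seed: int, guidance_scale: float) -> GenerativeOrigin:
    """Build an origin record keyed by the asset's content hash."""
    return GenerativeOrigin(
        asset_sha256=hashlib.sha256(asset_bytes).hexdigest(),
        model=model,
        model_version=model_version,
        prompt=prompt,
        seed=seed,
        guidance_scale=guidance_scale,
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def to_sidecar_json(origin: GenerativeOrigin) -> str:
    """Serialize the record so it can be stored alongside the asset."""
    return json.dumps(asdict(origin), indent=2, sort_keys=True)


# Illustrative values only; the model name and parameters are placeholders.
origin = capture_origin(
    asset_bytes=b"placeholder image bytes",
    model="example-diffusion-model",
    model_version="1.0",
    prompt="perforated brick facade, deep reveals",
    seed=42,
    guidance_scale=7.5,
)
print(to_sidecar_json(origin))
```

Any later edit to the file changes its hash, so a sidecar like this only identifies the original output. Connecting it to refined versions needs explicit parent links between records.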

What Municipalities and Clients Are Starting to Ask

The compliance landscape for AI in the built environment is still forming, but the direction is clear. Several developments are converging:

- Municipal disclosure requirements. Cities including New York and San Francisco have expanded AI transparency requirements in public-facing services. It is a short step from “disclose AI in hiring decisions” to “disclose AI in publicly funded building design.” Some municipal RFPs already include AI-use disclosure clauses.

- Client due diligence. Corporate real estate clients, particularly those subject to ESG reporting frameworks, are beginning to ask design firms about AI use in their workflows—not to prohibit it, but to document it. They need to know what they're approving.

- Insurance implications. Professional liability insurers are watching generative AI closely. Firms that can demonstrate a governed, traceable AI workflow may find themselves in a different risk category than those operating without provenance records.

The firms that will navigate this well are not the ones avoiding AI. They are the ones building the infrastructure to show their work.

What a Provenance-Ready AEC Workflow Looks Like

A provenance-ready workflow does not mean slowing down generative exploration. It means capturing lineage as a byproduct of creation rather than reconstructing it after the fact.

The requirements break down into four capabilities:

- Automatic origin capture. Every AI-generated asset enters the system with its generative metadata intact—model, version, prompt, parameters, timestamp. This happens at creation, not during a quarterly audit.

- Lineage across tools. When an asset moves from Midjourney to Rhino to Revit to a client-facing render, the relationships between versions are preserved. The final deliverable links back through every transformation to its origin.

- Human authorship documentation. The modifications, approvals, and creative decisions made by licensed professionals are recorded alongside the AI-generated components. This is what establishes copyrightability and professional accountability.

- Queryable compliance records. When a municipality, client, or insurer asks “Was AI used in this design?”, the firm can answer with specificity: which components, which tools, which humans reviewed and modified them, and when.

This is infrastructure, not a checklist. And that matters because the volume of AI-generated design assets is growing at 54–57% year over year. Manual tracking at that scale is not a workflow—it is a wish.
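As a rough sketch of how lineage across tools and queryable compliance records could fit together, the Python below models each asset version as a node with a link to its parent, then walks that chain to answer the "Was AI used?" question. The `AssetVersion` fields, the in-memory `registry`, and the sample IDs and reviewer names are all assumptions made for illustration. A production system would need persistent storage and support for assets with more than one parent.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AssetVersion:
    """One version of a design asset; all field names are illustrative."""
    asset_id: str
    tool: str                        # e.g. "diffusion-model", "Rhino", "Revit"
    ai_generated: bool
    parent_id: Optional[str] = None  # the version this one was derived from
    reviewed_by: List[str] = field(default_factory=list)  # humans who modified or approved it


def trace_lineage(asset_id: str, registry: Dict[str, AssetVersion]) -> List[AssetVersion]:
    """Walk parent links from a deliverable back to its origin."""
    chain: List[AssetVersion] = []
    seen = set()
    current: Optional[str] = asset_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in lineage at {current}")
        seen.add(current)
        version = registry[current]
        chain.append(version)
        current = version.parent_id
    return chain


def ai_use_report(asset_id: str, registry: Dict[str, AssetVersion]) -> dict:
    """Summarize which steps used AI, which tools were involved, and who reviewed."""
    chain = trace_lineage(asset_id, registry)
    return {
        "ai_used": any(v.ai_generated for v in chain),
        "ai_steps": [v.asset_id for v in chain if v.ai_generated],
        "tools": [v.tool for v in reversed(chain)],  # ordered origin first
        "reviewers": sorted({name for v in chain for name in v.reviewed_by}),
    }


# Hypothetical three-step history: concept image, Rhino refinement, Revit model.
registry = {
    "concept-001": AssetVersion("concept-001", "diffusion-model", True),
    "rhino-014": AssetVersion("rhino-014", "Rhino", False, "concept-001", ["A. Designer"]),
    "revit-032": AssetVersion("revit-032", "Revit", False, "rhino-014", ["B. Architect"]),
}
report = ai_use_report("revit-032", registry)
print(report)
```

The design choice worth noting is that the report is derived from the lineage rather than entered by hand, so its answer is only as complete as the parent links recorded at each handoff.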

The Competitive Advantage of Showing Your Work

There's a tendency to frame provenance as a burden—one more compliance requirement layered onto an already complex delivery process. I think the opposite is true.

Firms that can demonstrate a clear, auditable chain from generative concept to built structure will differentiate themselves in three ways:

- In procurement. As AI disclosure requirements enter RFPs, provenance-ready firms will qualify for projects that provenance-blind firms cannot.

- In litigation defense. When disputes arise—and in construction, they always arise—documented lineage is a shield, not a liability.

- In design culture. Teams that can find, reuse, and build on previous AI explorations work faster and more creatively than teams performing digital archaeology through unsorted project folders.

The question is not whether AEC firms will need provenance infrastructure. The question is whether they build it before or after the first major liability claim forces the industry's hand.

Key Takeaways

1. AI provenance in AEC is a liability issue, not just an operational one. Licensed architects bear professional responsibility for designs that may originate in generative AI tools without traceable lineage.

2. Copyright protection for AI-assisted designs depends on documented human authorship. The U.S. Copyright Office confirmed that prompts alone don't establish authorship. Without provenance records, firms risk losing IP protection on their most distinctive work.

3. Regulatory momentum is accelerating. The EU AI Act, California SB 942, and emerging municipal disclosure requirements are creating a compliance landscape that demands asset-level lineage tracking.

4. Provenance breaks happen at every handoff. From diffusion model to parametric tool to construction document, each software transition severs the generative trail unless infrastructure captures it automatically.

5. Showing your work is a competitive advantage. Firms with provenance infrastructure will win procurement, defend against litigation, and enable faster creative reuse, all before compliance mandates force the issue.